Last update: 13 Nov 2021

Nowadays a webcam is probably the most versatile input device, especially when combined with machine learning. A camera can track physical objects or faces, and it can also recognize body movements and even hands.

As of today, Google's MediaPipe seems to be the most powerful framework in terms of detection/tracking features and APIs.

7.1 Frameworks

In this section we review some current frameworks.

Also consider the software framework Wekinator when implementing gesture-based interaction.

7.1.1 MediaPipe (Google)

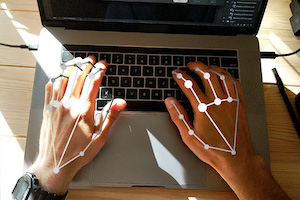

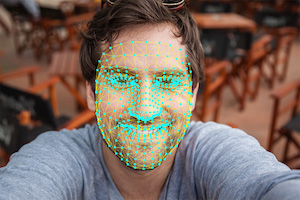

MediaPipe is a machine learning (ML) based framework, developed by Google, for face, hand and body tracking.

(Image sources: MediaPipe website)

The framework offers APIs for the following ecosystems:

- Python

- JavaScript

- Android

- iOS

The best starting point is the GitHub.io documentation. For a first impression you can look at one of the web demos that run directly in your browser: MediaPipe on CodePen.

7.1.2 Handsfree.js

Handsfree.js is a JavaScript framework for tracking hands, face and body. It is specifically designed to run directly in the browser and was used in the student project FACECON.

7.1.3 Others

Two more frameworks:

- TSPS is a cross-platform Toolkit for Sensing People in Spaces (I recommend downloading version 1.3.7 or higher when working on a Mac)

- OpenFace is a Python and Torch implementation of face recognition with deep neural networks and is based on the CVPR 2015 paper "FaceNet: A Unified Embedding for Face Recognition and Clustering" by Florian Schroff, Dmitry Kalenichenko, and James Philbin at Google

7.2 Scenarios

Three scenarios are specifically interesting in conjunction with camera-based sensing: tangible, proxemic and facial interaction.

7.2.1 Tangible User Interfaces (TUI)

A tangible user interface (TUI) uses physical objects to interact with digital information. One way to recognize and track physical objects is to attach markers to them that are easy to recognize. Such markers are also called fiducial markers.
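As a rough illustration of how a tracked marker can drive digital information, the following Java sketch binds marker IDs to named controls and converts a marker's rotation into a level between 0 and 1. The class name `MarkerControls`, the binding of ID 0 to "volume" and the assumption that the tracker reports the angle in radians are all hypothetical and only serve the example.

```java
import java.util.HashMap;
import java.util.Map;

/** Binds fiducial marker IDs to named controls (illustrative sketch). */
final class MarkerControls {

    private final Map<Integer, String> controlByMarkerId = new HashMap<>();

    /** Associates a marker ID with a control name chosen by the designer. */
    void bind(int markerId, String controlName) {
        controlByMarkerId.put(markerId, controlName);
    }

    /** Converts a rotation angle in radians (assumed unit) to a level in [0, 1). */
    static double angleToLevel(double angleRadians) {
        double fullTurn = 2 * Math.PI;
        double wrapped = angleRadians % fullTurn;
        if (wrapped < 0) {
            wrapped += fullTurn; // map negative angles into [0, 2*pi)
        }
        return wrapped / fullTurn;
    }

    /** Describes what a marker with the given rotation would set. */
    String describe(int markerId, double angleRadians) {
        String control = controlByMarkerId.get(markerId);
        if (control == null) {
            return "marker " + markerId + " is not bound";
        }
        long percent = Math.round(angleToLevel(angleRadians) * 100);
        return control + " = " + percent + "%";
    }

    public static void main(String[] args) {
        MarkerControls controls = new MarkerControls();
        controls.bind(0, "volume"); // hypothetical binding
        System.out.println(controls.describe(0, Math.PI));
        System.out.println(controls.describe(7, 0.0));
    }
}
```

Rotating the physical object then behaves like turning a knob, which is one of the simplest tangible mappings to try first.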

TUIs were introduced by Prof. Hiroshi Ishii who heads the Tangible Media Group at the MIT Media Lab. The milestone paper on this topic is the following (for more current publications see the group's homepage):

Hiroshi Ishii and Brygg Ullmer (1997) Tangible bits: towards seamless interfaces between people, bits and atoms. In Proceedings of the ACM SIGCHI Conference on Human factors in computing systems (CHI '97). ACM, New York, NY, USA, 234-241.

Student Projects

Check out our student projects using tangibles:

- Landscape Generator: Generating (random) virtual landscapes with physical dice

- Tangible Cube: Controlling music with a simple cube

- 3D Objects Rotation: An exploration of how to best rotate a simple 3D object using a physical object (sensing is not camera-based)

- BenutzBar: A cocktail bar interface with tangible objects

- TIM - Tangible Interaction Menu: Using a tangible object for menu selection in AR

7.2.2 Proxemic Interaction

In proxemic interaction the central idea is to transfer mechanisms from human-human communication to human-computer interaction. Specifically, people use their position and orientation relative to other people to adjust their communicative behavior. Read the excellent online article Proxemic Interactions by Saul Greenberg et al. (2011) to learn more about this research direction.

A milestone paper is

Ballendat, T., Marquardt, N. and Greenberg, S. (2010) Proxemic interaction: Designing for a proximity and orientation-aware environment. In: Proc. of the ACM International Conference on Interactive Tabletops and Surfaces 2010, ACM, New York.
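To make the idea of distance-dependent behavior concrete, the following Java sketch maps a measured distance to a coarse zone and picks a display mode for it. The zone boundaries use the values commonly cited from Edward Hall's work on proxemics; the class name, the `Zone` enum and the display modes are hypothetical and not taken from the papers above. Orientation is left out to keep the sketch short.

```java
/** Maps a sensed distance to a proxemic zone (illustrative sketch). */
final class ProxemicZones {

    /** Coarse zones; the boundaries below are assumptions, not fixed rules. */
    enum Zone { INTIMATE, PERSONAL, SOCIAL, PUBLIC }

    // Upper zone boundaries in metres (assumed, tune for your installation).
    static final double INTIMATE_MAX = 0.45;
    static final double PERSONAL_MAX = 1.2;
    static final double SOCIAL_MAX = 3.6;

    /** Returns the zone for a distance in metres, e.g. from a depth camera. */
    static Zone classify(double distanceMetres) {
        if (distanceMetres < 0) {
            throw new IllegalArgumentException("distance must not be negative");
        }
        if (distanceMetres < INTIMATE_MAX) return Zone.INTIMATE;
        if (distanceMetres < PERSONAL_MAX) return Zone.PERSONAL;
        if (distanceMetres < SOCIAL_MAX) return Zone.SOCIAL;
        return Zone.PUBLIC;
    }

    /** Hypothetical display modes: more detail as the person comes closer. */
    static String displayMode(Zone zone) {
        switch (zone) {
            case INTIMATE: return "direct touch controls";
            case PERSONAL: return "detailed content";
            case SOCIAL:   return "overview with large text";
            default:       return "ambient attract screen";
        }
    }

    public static void main(String[] args) {
        double[] samples = {0.3, 1.0, 2.5, 5.0};
        for (double d : samples) {
            Zone z = classify(d);
            System.out.println(d + " m -> " + z + " -> " + displayMode(z));
        }
    }
}
```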

Student Projects

Previous student projects often use the Kinect for sensing:

- NINGU: Alternative, gesture-based interaction technique for slide-based presentations

- Occlusion-Aware Presenter: Slide software that adjusts to the position of the presenter

7.2.3 Facial Expressions for Interaction

Facial expression is a relevant interaction modality because it requires little effort to change facial expression and it can be performed in parallel to manual activities like moving the mouse or performing a gesture. On the downside, facial expressions can lead to social awkwardness when done in public. Moreover, one has to be careful to ensure that no unintentional actions are triggered.

Student Projects

Check out various student projects using camera input, and specifically facial expression, for interaction:

- PICaFACE: Controlling camera features with facial expressions

- faceTYPE: Formatting text with face gestures

- Facial Call Skyping: Controlling video conferencing using face gestures

7.3 OpenCV for Processing

OpenCV is a powerful "computer vision" library originally developed by Intel. Computer vision is the science of extracting meaningful information from images or video.

The library itself is written in C++. A port for Processing is available, called OpenCV for Processing.

For interaction, the following functionality may be interesting:

FaceDetection: Identifies a face in the frame. Detection becomes less reliable when the person is not directly facing the camera.

BrightestPoint: Detects the brightest point in the frame. Can be used to interact with a small flashlight.

BackgroundSubtraction: Detects moving objects by comparing the current image frame with the previous one and subtracting pixels. Requires a static camera and constant lighting conditions.
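The ideas behind the last two functions can be sketched in plain Java on small grayscale frames, stored here as `int[][]` arrays with values from 0 to 255. This is not the OpenCV for Processing API, only a minimal illustration of the underlying operations; the class name `FrameOps`, its method names and the threshold value are hypothetical. Frames are assumed to be non-empty and of equal size.

```java
/** Minimal brightest-point and frame-differencing helpers (illustrative sketch). */
final class FrameOps {

    /** Returns {x, y} of the brightest pixel; ties go to the first one found. */
    static int[] brightestPoint(int[][] gray) {
        int bestX = 0;
        int bestY = 0;
        int best = -1;
        for (int y = 0; y < gray.length; y++) {
            for (int x = 0; x < gray[y].length; x++) {
                if (gray[y][x] > best) {
                    best = gray[y][x];
                    bestX = x;
                    bestY = y;
                }
            }
        }
        return new int[] {bestX, bestY};
    }

    /** Marks pixels whose brightness changed by more than the threshold. */
    static boolean[][] motionMask(int[][] previous, int[][] current, int threshold) {
        boolean[][] mask = new boolean[current.length][];
        for (int y = 0; y < current.length; y++) {
            mask[y] = new boolean[current[y].length];
            for (int x = 0; x < current[y].length; x++) {
                int diff = Math.abs(current[y][x] - previous[y][x]);
                mask[y][x] = diff > threshold;
            }
        }
        return mask;
    }

    /** Counts the pixels marked as moving. */
    static int countMoving(boolean[][] mask) {
        int count = 0;
        for (boolean[] row : mask) {
            for (boolean moving : row) {
                if (moving) count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        int[][] previous = {{10, 10, 10}, {10, 10, 10}};
        int[][] current = {{10, 200, 10}, {10, 10, 12}};
        int[] p = brightestPoint(current);
        System.out.println("brightest at x=" + p[0] + ", y=" + p[1]);
        System.out.println("moving pixels: " + countMoving(motionMask(previous, current, 25)));
    }
}
```

The threshold absorbs small sensor noise, which is why the method only works well when the camera is static and the lighting does not change.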

7.4 Fiducial Marker Tracking with reacTIVision

Martin Kaltenbrunner developed reacTIVision, a system that recognizes and tracks fiducial markers (see 7.2.1). See https://github.com/mkalten/reacTIVision or have a look at this paper:

Martin Kaltenbrunner and Ross Bencina (2007) reacTIVision: a computer-vision framework for table-based tangible interaction. In Proceedings of the 1st international conference on Tangible and embedded interaction (TEI '07). ACM, New York, NY, USA, 69-74.

Components

You need the following components to make this work:

- printed markers (on paper)

- software for tracking markers with a camera

- software for receiving the positions/orientations of the markers

You find everything here: http://reactivision.sourceforge.net

Markers and Tracking App

From the reacTIVision site, download:

- the markers as PDF and

- the tracking software (an .exe or .app file)

Then, try it out: Print out one page of markers, start the software and hold the markers in front of your notebook camera. You should see green numbers (IDs) on top of the markers.

Client

The reacTIVision application sends the position/orientation of the tracked markers to any TUIO client that is listening on port 3333. You can simply download and install a client, e.g. for Processing, and start it. When your reacTIVision app is running and tracking some markers, you should see them in your TUIO client app. The client is also available at the SourceForge link above.

Note for the Processing client: You install a Processing library by moving the directory from the downloaded ZIP file (called TUIO) to your Processing sketchbook (a folder on your hard drive). If you are not sure where this is located, open your Processing preferences where you can look up the path.
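TUIO is built on OSC and is typically sent over UDP, so you can also peek at the raw traffic without a client library. The following Java sketch listens on port 3333 and prints the OSC address of every message it receives, descending into OSC bundles. It is a debugging aid under stated assumptions, not a TUIO client: the class name `OscPeek` is hypothetical, message arguments are ignored, and the port must not be in use by another client at the same time.

```java
import java.net.DatagramPacket;
import java.net.DatagramSocket;
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

/** Prints the OSC addresses arriving on UDP port 3333 (illustrative sketch). */
final class OscPeek {

    /** Reads a null-terminated OSC string and skips its padding to 4 bytes. */
    static String readString(ByteBuffer buf) {
        int start = buf.position();
        int end = start;
        while (end < buf.limit() && buf.get(end) != 0) {
            end++;
        }
        byte[] raw = new byte[end - start];
        buf.get(raw);
        // Terminator plus padding: OSC strings occupy a multiple of 4 bytes.
        int padded = (raw.length / 4 + 1) * 4;
        buf.position(Math.min(start + padded, buf.limit()));
        return new String(raw, StandardCharsets.US_ASCII);
    }

    /** Collects the address of every message in a packet, descending into bundles. */
    static List<String> addresses(ByteBuffer buf) {
        List<String> found = new ArrayList<>();
        String head = readString(buf);
        if (!head.equals("#bundle")) {
            found.add(head); // a single message: the first string is its address
            return found;
        }
        if (buf.remaining() < 8) {
            return found; // malformed bundle without a time tag
        }
        buf.position(buf.position() + 8); // skip the 64-bit time tag
        while (buf.remaining() >= 4) {
            int size = buf.getInt(); // element size, big-endian
            if (size < 0 || size > buf.remaining()) {
                break; // malformed element, stop parsing
            }
            ByteBuffer element = buf.slice();
            element.limit(size);
            found.addAll(addresses(element));
            buf.position(buf.position() + size);
        }
        return found;
    }

    public static void main(String[] args) throws Exception {
        try (DatagramSocket socket = new DatagramSocket(3333)) {
            byte[] data = new byte[65535];
            while (true) {
                DatagramPacket packet = new DatagramPacket(data, data.length);
                socket.receive(packet);
                ByteBuffer buf = ByteBuffer.wrap(data, 0, packet.getLength());
                System.out.println(addresses(buf));
            }
        }
    }
}
```

If reacTIVision is running and nothing is printed, another client may already be bound to the port, or a firewall may be blocking local UDP traffic.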

7.5 Facial Expression Recognition with FaceOSC

FaceOSC is a tool that recognizes your face and puts a 3D mesh over it so that mouth shape, eyebrows and other features can be recognized. As the name implies, the tool sends recognized key values via OSC to other tools (like Processing).

An example of what you can do with it is the student project faceTYPE by Alice Strunkmann‐Meister and Rodrigo Blásquez at Augsburg University of Applied Sciences.

Installation

FaceOSC works on Windows and macOS. You will have to run a separate program (FaceOSC) that performs the recognition and then sends the data to a Processing sketch (FaceOSCReceiver).

For installation, do the following:

- Install FaceOSC

  - From the releases, pick the file for your OS (e.g. FaceOSC-v1.11-win.zip or FaceOSC-v1.1-osx.zip)

  - Unpack the ZIP file

  - You should find an executable, e.g. bin/FaceOSC.exe for Windows

- Install Processing 3

- In Processing, install the library oscP5 (under Sketch > Import Library)

- Go to the FaceOSC-Templates project and, in the processing folder, download FaceOSCReceiver

Starting Recognition

To start the recognition process, do the following:

- Start FaceOSC (not in Processing, but directly, e.g. by double-clicking the .exe file on Windows): you will see a window with your camera image and, if a face is present, a mesh over the face.

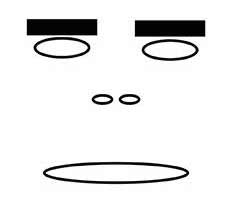

- In Processing, open and start FaceOSCReceiver: you will see a stylized face that mimics your facial movements

This is what Processing shows you:

Now you can think up all kinds of actions and features that you control with your eyebrows or mouth.

Here's a list of signals that you receive on the Processing side (from FaceOSCReceiver):

public void found(int i)

public void poseScale(float s)

public void posePosition(float x, float y)

public void poseOrientation(float x, float y, float z)

public void mouthWidthReceived(float w)

public void mouthHeightReceived(float h)

public void eyeLeftReceived(float f)

public void eyeRightReceived(float f)

public void eyebrowLeftReceived(float f)

public void eyebrowRightReceived(float f)

public void jawReceived(float f)

public void nostrilsReceived(float f)
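To turn a continuous signal such as the mouth height into a discrete action without accidental retriggering, one common approach is a trigger with two thresholds (hysteresis). The sketch below is plain Java so that it runs outside Processing. The class name `MouthTrigger`, the method `update` and the threshold values are hypothetical; the value range that FaceOSC actually reports should be checked by printing the incoming values first.

```java
/** Fires once each time a signal rises above a threshold (illustrative sketch). */
final class MouthTrigger {

    private final float openAbove;  // signal must exceed this to fire
    private final float closeBelow; // signal must drop below this to re-arm
    private boolean open = false;
    private int count = 0;

    MouthTrigger(float openAbove, float closeBelow) {
        if (closeBelow >= openAbove) {
            throw new IllegalArgumentException("closeBelow must be less than openAbove");
        }
        this.openAbove = openAbove;
        this.closeBelow = closeBelow;
    }

    /** Feeds one new value; returns true only at the moment the trigger fires. */
    boolean update(float value) {
        if (!open && value > openAbove) {
            open = true;
            count++;
            return true;
        }
        if (open && value < closeBelow) {
            open = false; // re-arm only after a clear closing movement
        }
        return false;
    }

    /** Number of times the trigger has fired so far. */
    int count() {
        return count;
    }

    public static void main(String[] args) {
        MouthTrigger trigger = new MouthTrigger(4.0f, 2.0f); // assumed thresholds
        float[] heights = {1.0f, 2.5f, 4.2f, 3.8f, 4.1f, 2.5f, 1.5f, 4.5f};
        for (float h : heights) {
            if (trigger.update(h)) {
                System.out.println("action triggered at height " + h);
            }
        }
        System.out.println("total triggers: " + trigger.count());
    }
}
```

In the FaceOSCReceiver sketch, `update(h)` would be called from `mouthHeightReceived(float h)`. The gap between the two thresholds keeps small fluctuations around a single threshold from firing the action repeatedly.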