Microsoft’s Kinect sensor can recognize human bodies and track the movement of body joints in 3D space. In 2019 Microsoft released an entirely new version called Azure Kinect DK. It is the third major version of the Kinect.

Originally, the Kinect was released in 2010 (version 1, for the Xbox 360) and 2013 (version 2, for the Xbox One), but production was discontinued in 2017. However, Kinect technology was integrated for gesture control in the HoloLens (2016). While the Kinect failed to become a mainstream gaming controller, it was widely used for research and prototyping in the area of human-computer interaction.

In early 2022 we acquired the new Azure Kinect for the Interaction Engineering course at a cost of around 750 € here in Germany.

Setting up the Kinect

The camera has two cables: a power supply and a USB connection to a PC. You have to download and install two software packages:

- Azure Kinect SDK

- Azure Kinect Body Tracking SDK

It feels a bit archaic because you need to run executables in the console. For instance, it is recommended that you perform a firmware update on the sensor. For this, go into the directory of the Azure Kinect SDK and call “AzureKinectFirmwareTool.exe -Update <path to firmware>”. The firmware is in another directory of this package.

As a next step, go into the /tools directory of the Azure Kinect Body Tracking SDK, where you can start the 3D viewer. Again, the program requires one parameter, so you cannot just double-click it in the file explorer. Type “k4abt_simple_3d_viewer.exe CPU” or “k4abt_simple_3d_viewer.exe CUDA” to start the viewer.
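If typing the command each time gets tedious, a small launcher script can wrap it. The following is a minimal sketch in Python, not part of the SDK: the install path in `TOOLS_DIR` is an assumption (adjust it to your machine), and `viewer_command` and `launch_viewer` are helper names made up for this example. Only the executable name and the CPU/CUDA parameter come from the text above.

```python
import subprocess
from pathlib import Path
from typing import List

# Assumed install location (hypothetical); change this to wherever
# the Azure Kinect Body Tracking SDK is installed on your machine.
TOOLS_DIR = Path(r"C:\Program Files\Azure Kinect Body Tracking SDK\tools")


def viewer_command(mode: str, tools_dir: Path = TOOLS_DIR) -> List[str]:
    """Build the command line for the 3D viewer in CPU or CUDA mode."""
    mode = mode.upper()
    if mode not in ("CPU", "CUDA"):
        raise ValueError(f"mode must be CPU or CUDA, got {mode!r}")
    return [str(tools_dir / "k4abt_simple_3d_viewer.exe"), mode]


def launch_viewer(mode: str = "CPU", tools_dir: Path = TOOLS_DIR) -> None:
    """Start the viewer from its own directory and wait until it is closed."""
    subprocess.run(viewer_command(mode, tools_dir), cwd=tools_dir, check=True)
```

Calling `launch_viewer("CUDA")` from a script then has the same effect as typing the command in the console.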

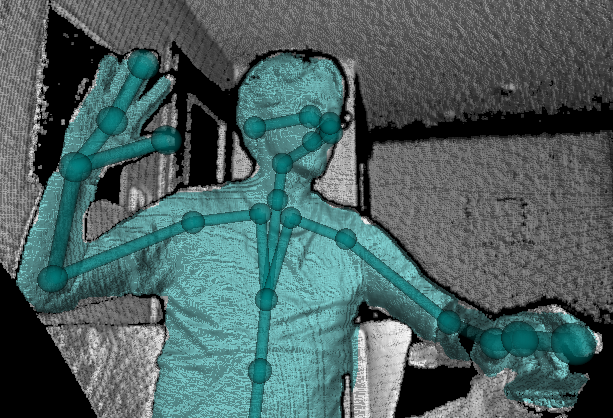

This is what you see (with the CPU version this is very slow).

Differences between Kinect versions

The new Kinect improves on various aspects of the older ones. The two most relevant aspects are the field of view (how wide the camera's viewing angle is) and the number of skeleton joints that are reconstructed.

| Feature | Kinect 1 | Kinect 2 | Kinect 3 |
| --- | --- | --- | --- |
| Camera resolution | 640×480 | 1920×1080 | 3840×2160 |
| Depth camera | 320×240 | 512×424 | 640×576 (narrow), 512×512 (wide) |
| Field of view | H: 57°, V: 43° | H: 70°, V: 60° | H: 75°, V: 65° (narrow); H: 120°, V: 120° (wide) |
| Skeleton joints | 20 | 25 | 32 |
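To get a feeling for what the field of view values mean in practice, you can estimate how wide an area the camera covers at a given distance. The sketch below uses the idealized formula width = 2 · d · tan(FOV / 2). This is a simplification that ignores lens distortion, which is likely to matter for the 120° wide mode. The function name `coverage_width` is made up for this example, and the angles are the horizontal values from the table.

```python
import math


def coverage_width(fov_degrees: float, distance_m: float) -> float:
    """Width of the visible area at a given distance (idealized, no lens distortion)."""
    return 2.0 * distance_m * math.tan(math.radians(fov_degrees) / 2.0)


# Horizontal field of view in degrees, taken from the table above.
HORIZONTAL_FOV = {
    "Kinect 1": 57,
    "Kinect 2": 70,
    "Kinect 3 (narrow)": 75,
    "Kinect 3 (wide)": 120,
}

for name, fov in HORIZONTAL_FOV.items():
    width = coverage_width(fov, 2.0)
    print(f"{name}: about {width:.2f} m wide at 2 m distance")
```

Under these assumptions, a person standing 2 m away is inside a roughly 2.2 m wide strip with the Kinect 1, while the wide mode of the new Kinect covers almost 7 m.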

There is an open-access publication dedicated to the comparison between the three Kinects:

Michal Tölgyessy, Martin Dekan, Ľuboš Chovanec and Peter Hubinský (2021) Evaluation of the Azure Kinect and Its Comparison to Kinect V1 and Kinect V2. In: Sensors 21 (2). Download here.

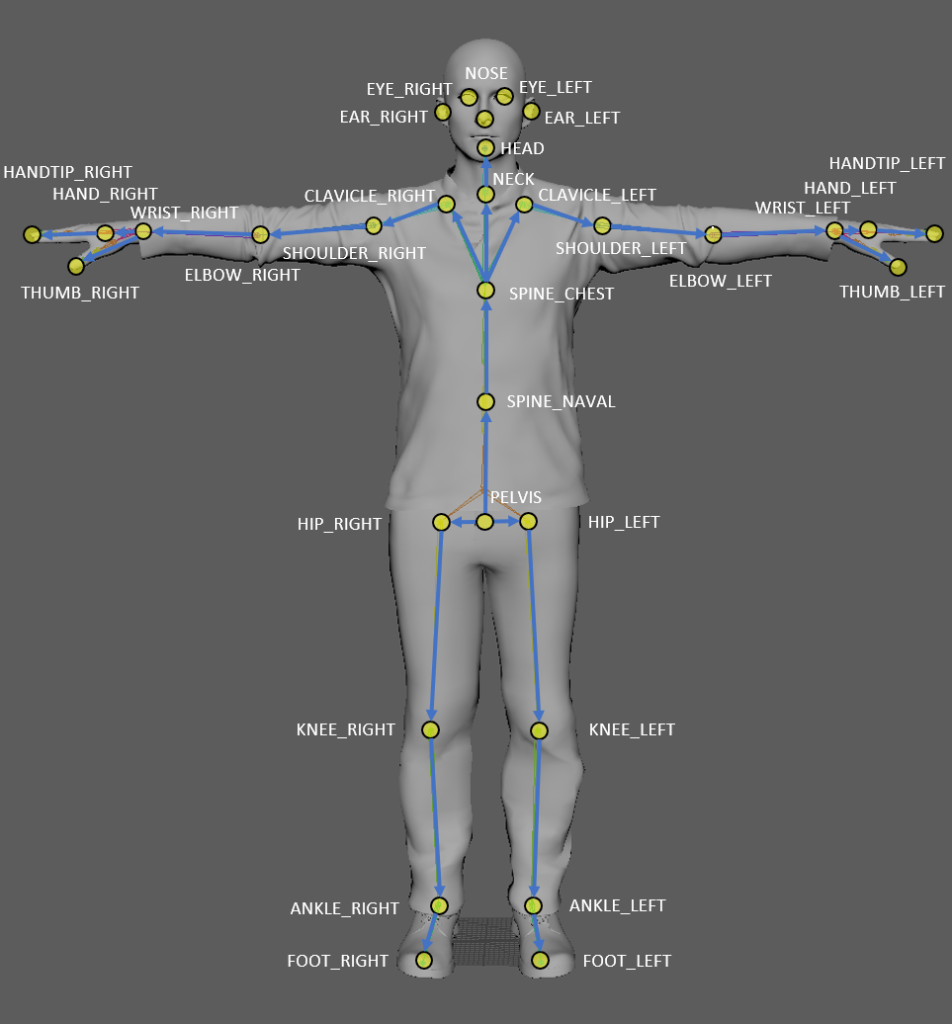

Skeleton

Here is a schematic view of the joints that are recognized. In practice it turns out that one has to pay special attention to the robustness of the signal for the hands, the feet and also the head orientation.
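One common way to deal with jittery joints is to smooth each joint position over time and to ignore samples that the tracker itself marks as unreliable. The following is a minimal sketch of that idea using an exponential moving average. It does not use the Kinect SDK: the class `JointSmoother`, the joint name and the integer confidence scale (0 = none, 1 = low, 2 = medium, 3 = high) are assumptions for illustration, so check how your library reports joint confidence before adapting it.

```python
from typing import Dict, Optional, Tuple

Vec3 = Tuple[float, float, float]


class JointSmoother:
    """Exponential moving average per joint; low-confidence samples are skipped."""

    def __init__(self, alpha: float = 0.3, min_confidence: int = 2):
        self.alpha = alpha                    # 0..1, higher means less smoothing
        self.min_confidence = min_confidence  # assumed scale: 0 none .. 3 high
        self.state: Dict[str, Vec3] = {}

    def update(self, joint: str, position: Vec3, confidence: int) -> Optional[Vec3]:
        previous = self.state.get(joint)
        if confidence < self.min_confidence:
            # Hold the last trusted value (None if there is none yet).
            return previous
        if previous is None:
            smoothed = position
        else:
            a = self.alpha
            smoothed = tuple(a * p + (1 - a) * q for p, q in zip(position, previous))
        self.state[joint] = smoothed
        return smoothed


# Usage with made-up sample data (positions in meters).
smoother = JointSmoother(alpha=0.5)
smoother.update("HAND_RIGHT", (0.0, 0.0, 1.0), confidence=2)
print(smoother.update("HAND_RIGHT", (0.2, 0.0, 1.0), confidence=2))  # moves halfway
print(smoother.update("HAND_RIGHT", (5.0, 5.0, 5.0), confidence=0))  # outlier ignored
```

A lower `alpha` gives a calmer but more sluggish signal, so for fast hand gestures you may want a higher value than for the head or torso.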

To integrate the Kinect with a JavaScript program, e.g. using p5js, I recommend looking at the Kinectron project.

Links

Microsoft’s Azure Kinect product page

Azure Kinect documentation page

Course chapter on the Kinect (Interaction Engineering) in German

Wikipedia on the Kinect (very informative)

For developers

Kinectron (JavaScript, including p5js)

Azure Kinect DK Code Samples Repository

Azure Kinect Library for Node / Electron (JavaScript)

Azure Kinect for Python (Python 3)